By Xiang Yu, formerly a graduate fellow in strategic foresight with KnowledgeWorks and a doctoral student in philosophy

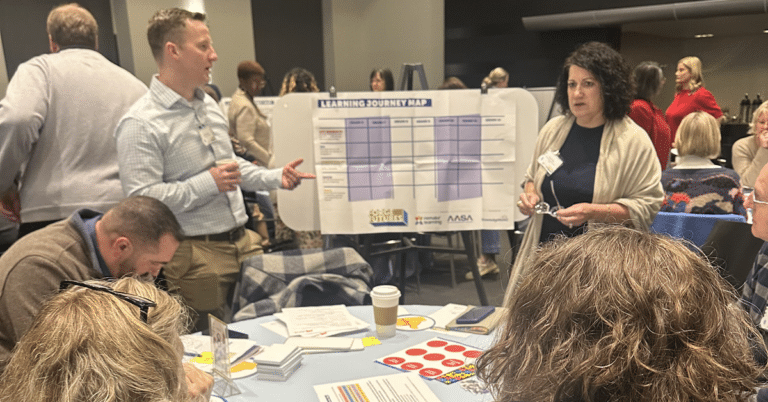

Families and organizations use many kinds of technology to support children’s development, but when ethical boundaries are absent or unclear, it is not always obvious whether a particular use of technology is ethical. In our recent early childhood forecast, Foundations for Flourishing Futures: A Look Ahead for Young Children and Families©, we recommend that families and organizations consider the ethical implications of using technology.

Technology use can also create problems for children. How can different moral frameworks help us think through the ethics of technology?

Ethical problems raised by technology

Despite their advantages, technologies intended to support children’s development have created problems that sometimes undermine this goal. Excessive screen time, in particular, has contributed to childhood health problems such as obesity, headaches, eye problems and sleep problems. It has also reduced children’s interaction with their parents and with other children. For example, in a traditional learning setting, it is easy for children to talk with parents or caregivers while reading stories or playing games together. When children read from an e-book or play digital games, however, interacting with their parents or caregivers becomes more difficult. Without opportunities to engage with others in a social setting, children miss chances to learn to take others’ perspectives and to cultivate social emotions such as empathy.

Violation of children’s privacy is another issue that is often considered morally problematic. Tech companies have been collecting data on children through smart devices such as smart speakers and Wi-Fi-enabled toys, as well as through gaming apps. Parents, too, sometimes violate their children’s privacy. For example, many parents share private information about their children on social media before the children are old enough to give consent. Sharing information such as a child’s name, date of birth and mother’s maiden name can leave the child vulnerable to identity theft after they turn 18.

In addition, algorithms designed to make predictions can create moral dilemmas. For example, Los Angeles County’s Approach to Understanding Risk Assessment project developed an algorithmic tool intended to identify children at risk of abuse. The goal was to use predictive analytics to remove children from abusive environments without mistakenly removing children who were not being abused from healthy ones. But no predictive algorithm is perfect: every such algorithm has a false positive rate above zero. In this case, that meant that if the county’s Department of Children and Family Services followed the algorithm’s recommendations, some children would be removed from families that were not abusive. The question is: should we use an algorithm that we know in advance will lead to children being wrongfully removed from their homes at least a small percentage of the time?

Assessing the ethics of technology using moral frameworks

Ethical theories give us frameworks for thinking about how we should act in particular situations. They help us work out what the morally right thing to do is. Since consequentialism and deontology are two of the most prominent ethical theories in the philosophical literature, let’s focus on them.

According to consequentialism, we are morally obligated to choose whichever available course of action maximizes overall positive consequences. According to deontology, we should not violate moral rules even when violating them would maximize positive consequences. On this theory, what matters morally is the intention or motive of the agent, rather than the outcomes of her actions.

To illustrate how these theories might be applied to the ethics of technology, take children’s privacy as an example. From a consequentialist point of view, violating children’s privacy can be permissible as long as the violation maximizes overall positive consequences. For example, if using a smart device brings so much benefit to a family that it outweighs the harm caused by any violation of children’s privacy, then the violation is justified. If the harm outweighs the benefit, then the violation is morally wrong. In contrast, from a deontological point of view, if it is a moral rule not to violate anyone’s privacy, then using smart devices that violate children’s privacy is forbidden, even if doing so generates more positive consequences than negative ones.

For parents and educators who want to use technology to support children’s development but who worry about its ethics, it can help to recognize the ethical frameworks, such as consequentialism and deontology, that are often implicit in our thinking. Using these frameworks to think about technology can guide us in deciding what to do in a particular situation. They can also help us appreciate the complexity of the ethical issues posed by technology use and lead us toward better answers.